I have been musing about some threads relating to data for a while, and I've noticed they have been tangling people up. These threads resonated at the recent Spatial Sciences Institute (SSI) Conference held in Melbourne, Sept. 12th-16th. I want to unravel a few threads in light of that conference.

It has struck me for quite a while how crazy things are in our industry. Let me give an analogy that describes the bind in which, until recently, we found ourselves. Your hi-fi system dies. Off to the hi-fi shop you go and, before you know it, you are walking out of the shop with a system that would take someone with a Ph.D. to use! Needless to say, your wallet is very much lighter. Your new system, you tell your friends, is the best - it has features, features and more features. Then the awful truth dawns on you: you can't afford the music CDs!

Lots and lots of people have told me how they have the biggest and best GIS around. (What my previous employer's CEO called a "Rolls-Royce GIS.") But then they go on to bemoan the fact that they can neither access nor afford the data.

In the Australian environment, the question of data access and pricing is a perennial one. So it was no surprise that it was talked about constantly at the recent GITA Australia (reviewed here) and SSI conferences.

Stuart Nixon: The "Where" of Data

Stuart Nixon, CEO of ERMapper (based in Perth, Western Australia), gave a keynote presentation on the first day of the SSI conference. He offered a marketing and financial perspective on what, in his view, is holding back the geospatial industry.

Nixon explained that he thought we were good at capturing data; pretty good at managing it (though I beg to differ there); but pretty awful at delivery. By "delivery," he wasn't referring to its technical aspects per se; rather, he meant the way in which we put our services in the hands of the masses. In his view, the latter needs to change, and change quickly. To create a framework for discussing it, he characterized the spatial market as being:

- Small but expert;

- Something that, when compared to search engines, is not used by everyone;

- Restricted by business and data copyright models;

- Characterized by poorly integrated data; and

- Distinguished by having most of its data locked up.

"What" and "Where"

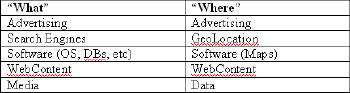

To illustrate, Nixon compared the value of the "What" industry (i.e. search engines like Google, MSN, Yahoo!, etc.) to the "Where" industry (us). The "What" industry, in his view, generates money, whereas the "Where" industry spends it. The "What" industry does this by putting information about things into the hands of anyone. This is the value of "What": accessibility. Nixon presented a diagram to compare the two industries.

A Way Forward?

Nixon thinks there is a way forward, but first three missing things need to be fixed.

1. We can't locate (geocode) things. Geocoding for Nixon is the equivalent of a Google "search." He thinks there is no unified approach and that it is costly (currently about one cent per transaction). Nixon stated that the industry must stop seeing geocoding as the business model and instead see it as the enabling technology for value-added services that anyone can use. Part of the issue, Nixon believes, is the lack of free street databases on which to base geocoding.

Putting Money Where Mouth Is!

I take a somewhat different view than Nixon on this. I simply don't believe that geocoding is a geospatial process. My view is that it is a semantic matching problem in which two fuzzy sentences (addresses) are matched for the purpose of transferring two attributes: a latitude and a longitude. Yes, geocoding requires some geoprocessing to create a matchable dataset (from multiple spatial sources), but executing a geocode operation does not need a GIS.
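To make the "semantic matching" view concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the reference table, its coordinates, the abbreviation list, the function names and the 0.8 threshold are all made up for the example, and the string similarity measure (the standard library's `difflib.SequenceMatcher`) simply stands in for whatever matching logic a real geocoder would use.

```python
import re
from difflib import SequenceMatcher

# Hypothetical reference dataset: address text -> (latitude, longitude).
# The coordinates are invented placeholders, not surveyed positions.
REFERENCE = {
    "12 Elizabeth Street, Hobart, Tasmania": (-42.88, 147.33),
    "45 Macquarie Street, Hobart, Tasmania": (-42.89, 147.32),
    "3 Charles Street, Launceston, Tasmania": (-41.44, 147.14),
}

# Assumed abbreviation table; a real one would be far larger.
ABBREVIATIONS = {"st": "street", "rd": "road", "ave": "avenue", "tas": "tasmania"}


def normalize(address):
    """Lowercase, drop punctuation and expand common abbreviations."""
    tokens = re.findall(r"[a-z0-9]+", address.lower())
    return " ".join(ABBREVIATIONS.get(token, token) for token in tokens)


def geocode(address, reference, threshold=0.8):
    """Return the (lat, lon) of the best-matching reference address, or None."""
    query = normalize(address)
    best_key, best_score = None, 0.0
    for candidate in reference:
        score = SequenceMatcher(None, query, normalize(candidate)).ratio()
        if score > best_score:
            best_key, best_score = candidate, score
    if best_key is None or best_score < threshold:
        return None  # no candidate is similar enough to trust
    return reference[best_key]


print(geocode("12 Elizabeth St., Hobart TAS", REFERENCE))
print(geocode("1 Nowhere Lane, Perth WA", REFERENCE))
```

Nothing here touches geometry: the latitude and longitude are just two attributes carried across once the text match succeeds, which is the point of the argument above.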

2. Current data licensing models and access modes are too restrictive. Nixon highlighted the dilemma when he asked the rhetorical question: Why is our satellite imagery rotting in tape libraries when it should be getting used? He also underlined his passion on this topic when he proclaimed that there is better, free, detailed coverage of Mars than of Earth! Sure, Australia has some large datasets, but current license and access agreements are killing access.

Part of the problem is that the major suppliers of data are the government agencies. Some of the data are free but some are not. The problem is compounded by the fact that much of the data (e.g. topographic data) was captured in the days before economic rationalism saw governments retreat from major infrastructure projects like mapping.

This is not an issue that is isolated to Australia. In my view, some of the current owners of navigable road datasets will face this dilemma if they continue to price themselves out of Internet portal use. The restrictions on use of road data inside Google Maps are not Google's fault. It is the data licensing that is the issue: this licensing runs counter to the portal's approach of ubiquitous access. If this becomes a problem, Google and the other location-enabled portals just might go elsewhere for the data (or capture it themselves).

I think new technologies like LiDAR (Light Detection And Ranging, discussed below and defined here) have the potential to change this. Much more on this in Part 2 of this article, which will appear next week.

3. Current data delivery mechanisms are poor. Those of us who thought CD and DVD technologies were a boon to data delivery can think again. Now even these are not good enough. In the Internet age, the main method of delivery should be via the Web (for those who can afford the bandwidth).

Nixon drove home his point that "usage equates to value" when he made a special announcement that ERMapper, in conjunction with the Australian Greenhouse Office and GeoScience Australia, is sponsoring a new web site to enable greater access to large geospatial datasets.

Before Nixon made the announcement, he noted that BitTorrent accounts for up to 35% of today's Internet traffic and is, therefore, the delivery mechanism of choice for Internet data delivery. The new site Nixon announced is called www.geoTorrent.org.

The technological vision and driver for the new site is one of ERMapper's staff, Richard Orchard. The site uses BitTorrent technology to improve user access and delivery, in the hope of increasing usage and value. It is not meant for online access or searching; rather, it is simply for data delivery. The great thing about this site is that the last 30 years of Landsat imagery for Australia is now available at one access point! Perhaps not "killer data," but it is a great start that will hopefully cause others to open their data vaults to the public.

Too late?

But is this a late response from an industry caught with its pants down by Google? Is it already game, set and match to Google?

Nearly. An example may help clarify why I think this might be the case. In my home state, Tasmania, there has been a groundswell of demand for high-resolution imagery for use in the private and public sectors. Local government (there are 29 councils in Tasmania), fire, police, ambulance, parks and other state government agencies, forestry companies, agribusinesses, etc., all want this data for their many needs. None of them, as individuals, can afford to buy and process imagery from the main suppliers to create a single seamless mosaic over their operational areas and keep it up-to-date. But, as a single body, they could afford such imagery.

The problem is that the main agency for coordinating data capture and access within the state, The LIST, has not been responsive to this demand. It has not gathered the many players together to ascertain need (coverage, scale, sensors, time scales), draw up common specifications, negotiate with suppliers, and then coordinate the processing of the product to make it available to all. Something might be happening as I write, but this is a reactive response rather than proactive service provision.

At a recent local Tasmanian conference, I publicly asked the question: "Do we have to wait until Google buys all the DigitalGlobe data for Tasmania, processes it and makes it available in Google Maps/Google Earth before we can finally get the data we need?" Someone in the audience yelled out: "Too right, mate!" If my listener's response is any measure, the decision has been made: we will wait for Google to do what we seem incapable of doing ourselves!

What Google has done in this space can be likened to "killer data." In my Killer Apps article published last week, I used the term in the following sense (from Wikipedia): "The term is especially used when the technology existed before but did not take off before the introduction of the killer application." We know that large data stores existed before Google Maps and Google Earth, but these were not as accessible or useful (raw data for download is not what most people want). Thus, it seems to me that the only real data integration Web Service that has any traction in the market today is Google's KML (reviewed here).

But with Google, geospatial data's presence has become visible and so is now "taking off," as it were. Google knows data is everything in the geospatial business. One attendee at the SSI conference informed me that Google has a contract with DigitalGlobe for their data and is buying an incredible amount. This data will be made accessible via its platform interfaces (Maps API and KML). Perhaps it is these interfaces that will open up data access far more than the ones the geospatial industry has been pushing for quite a while now.

In summary, let's keep it simple. If I could provide my users with a Google Maps layer within their GIS application(s) in the same way we access WMS services, then it really is close to game, set and match! Tasmanian users will get what they want: seamless, statewide coverage of up-to-date high-resolution imagery. And, just in case Google is listening, they would be willing to pay!
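For readers who have not seen what "accessing a WMS service" involves, the sketch below builds a standard WMS 1.1.1 GetMap request URL in Python. The parameter names are the ones the WMS specification defines; everything else (the endpoint `https://example.org/wms`, the layer name `statewide_imagery`, the function name and the rough bounding box around Tasmania) is a hypothetical placeholder, not a real service.

```python
from urllib.parse import urlencode


def wms_getmap_url(base_url, layer, bbox, width, height,
                   srs="EPSG:4326", image_format="image/png"):
    """Build a WMS 1.1.1 GetMap URL for one layer.

    bbox is (min_x, min_y, max_x, max_y) in the units of the given SRS.
    """
    params = {
        "SERVICE": "WMS",
        "VERSION": "1.1.1",
        "REQUEST": "GetMap",
        "LAYERS": layer,
        "STYLES": "",  # empty value asks the server for its default style
        "SRS": srs,
        "BBOX": ",".join(str(value) for value in bbox),
        "WIDTH": width,
        "HEIGHT": height,
        "FORMAT": image_format,
    }
    return base_url + "?" + urlencode(params)


# Hypothetical endpoint and layer; the bounding box is only approximate.
url = wms_getmap_url(
    "https://example.org/wms",
    "statewide_imagery",
    (143.5, -43.8, 148.6, -39.5),
    width=512,
    height=512,
)
print(url)
```

A GIS client that speaks WMS issues requests of this shape and drapes the returned image under the user's own layers, which is the kind of plug-in access the paragraph above wishes for.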

[Part 2 will appear next week.]